Introduction

Site Reliability Engineering has moved from being a “big tech” practice to a business-critical capability for banks, telecoms, SaaS companies, healthcare providers, manufacturers, retailers, and government digital services. In 2026, organizations are no longer asking whether they need SRE. They are asking why reliability remains difficult despite cloud platforms, DevOps pipelines, automation tools, observability dashboards, and AI-assisted operations.

The answer is simple but uncomfortable: many organizations have added more tools without reducing operational complexity. Teams still spend too much time on repetitive manual work, alerts are still noisy, incident ownership is often unclear, and reliability metrics are not always linked to customer impact.

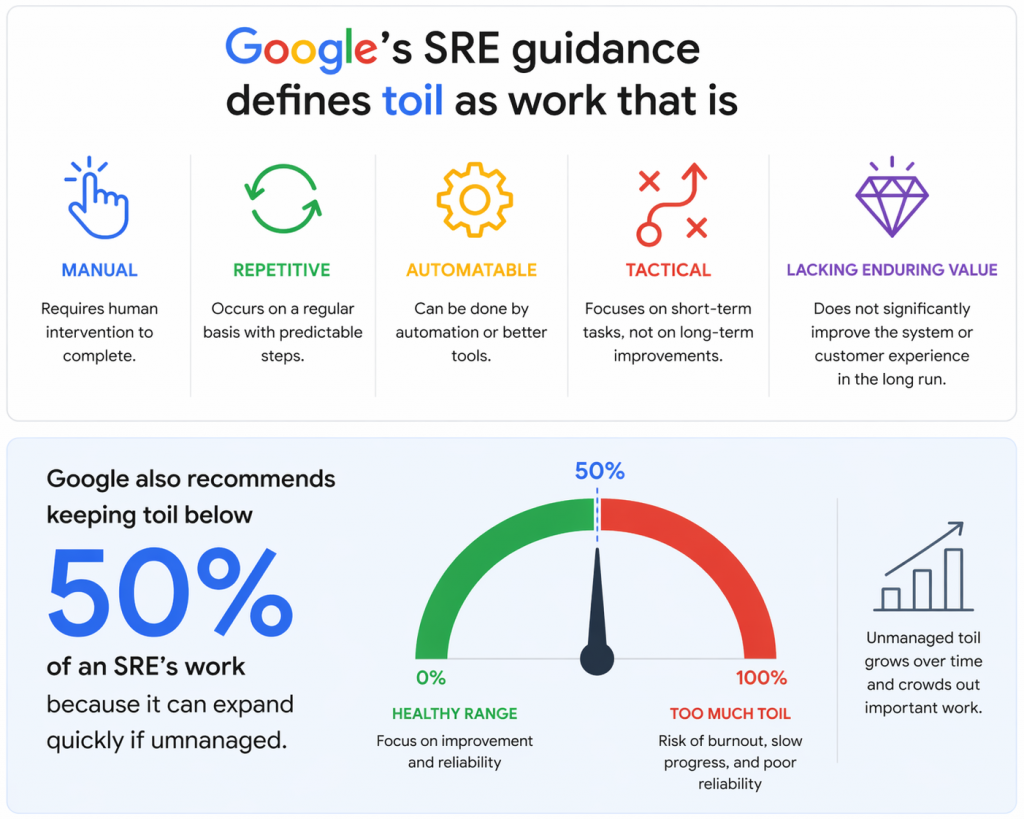

Google’s SRE guidance defines toil as work that is manual, repetitive, automatable, tactical, and lacking enduring value. Google also recommends keeping toil below 50% of an SRE’s work because it can expand quickly if unmanaged.

Source: Google’s SRE guidance on toil

At the same time, observability tool overload is becoming a real challenge. Grafana Labs’ 2025 Observability Survey found that respondents cited 101 different observability technologies currently in use, showing how fragmented modern monitoring and reliability ecosystems have become.

This post explains the top SRE challenges in 2026 and offers practical solutions that organizations can use to reduce toil, simplify tools, improve service reliability, and build stronger SRE capability.

Why SRE Matters More in 2026

Digital products now operate across cloud, hybrid infrastructure, microservices, APIs, containers, AI systems, and third-party platforms. One failed dependency can affect thousands of users in minutes. For enterprises, reliability is no longer just an IT metric. It directly affects revenue, brand trust, regulatory compliance, customer experience, and employee productivity.

SRE helps organizations balance speed and stability by combining software engineering, operations, automation, observability, incident response, and service-level thinking. PeopleCert’s official SRE Foundation page positions SRE as a way to combine development and operations for efficient, reliable, and secure large-scale applications.

However, adopting SRE is not the same as hiring a few SREs or buying observability tools. Real SRE maturity requires better engineering habits, measurable SLOs, reduced manual operations, clear incident processes, and leadership support.

Table: Top SRE Challenges in 2026 and Business Impact

| SRE Challenge | What It Looks Like | Business Impact | Practical Fix |
|---|---|---|---|
| Toil overload | Manual deployments, repetitive checks, ticket-based approvals | Slower delivery, burnout, poor morale | Automate repeatable work and track toil hours |
| Tool overload | Too many dashboards, monitoring tools, and alert sources | Higher cost, confusion, missed signals | Consolidate tools and standardize observability |
| Alert fatigue | Duplicate, false, or low-value alerts | Missed incidents, delayed response | Tune alerts around SLOs and customer impact |
| Weak SLO practices | Teams track uptime but not user experience | Poor reliability decisions | Define SLIs, SLOs, and error budgets |
| Incident ownership gaps | Teams debate responsibility during outages | Longer MTTR and customer disruption | Create clear escalation and response models |
| Cloud complexity | Hybrid and multi-cloud environments with unclear visibility | Operational risk and cost leakage | Use full-stack observability and platform engineering |
| Skills gap | DevOps teams lack SRE depth | Inconsistent reliability practices | Build structured SRE training and certification pathways |

Challenge 1: Toil Still Consumes Too Much Engineering Time

Toil is one of the biggest enemies of SRE maturity. It includes repetitive tasks such as restarting services, clearing disk space, manually reviewing alerts, applying routine configuration changes, creating access tickets, or running scripts that should already be automated.

A small amount of operational work is normal. The problem starts when repetitive work becomes the default way of running systems. When engineers spend most of their day handling tickets and incidents, they have little time left for automation, architecture improvement, capacity planning, chaos testing, or reliability engineering.

Google’s SRE guidance states that reducing toil is central to the “engineering” part of Site Reliability Engineering. The purpose is not simply to keep systems running, but to make systems easier to operate as they scale.

How Organizations Can Fix Toil

Organizations should start by measuring toil instead of guessing at it. Every SRE or operations team can classify its weekly work into categories such as manual incident response, repetitive service requests, automation work, reliability projects, deployment support, and documentation.
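As a rough illustration of that measurement, the sketch below totals one week of logged hours per category and reports the share that counts as toil. Which categories count as toil is an assumption made here for the example (the `TOIL_CATEGORIES` set), and the hours are invented; each team would decide its own classification.

```python
from collections import defaultdict

# Assumption for this sketch: these categories are treated as toil.
# Teams should agree on their own classification.
TOIL_CATEGORIES = {
    "manual incident response",
    "repetitive service requests",
    "deployment support",
}


def toil_share(entries):
    """Return (toil_fraction, hours_by_category) for (category, hours) pairs."""
    hours_by_category = defaultdict(float)
    for category, hours in entries:
        hours_by_category[category] += hours
    total = sum(hours_by_category.values())
    if total == 0:
        return 0.0, dict(hours_by_category)
    toil = sum(h for c, h in hours_by_category.items() if c in TOIL_CATEGORIES)
    return toil / total, dict(hours_by_category)


# Invented sample data for one engineer-week (40 hours).
week = [
    ("manual incident response", 10),
    ("repetitive service requests", 8),
    ("deployment support", 6),
    ("automation work", 8),
    ("reliability projects", 6),
    ("documentation", 2),
]
share, breakdown = toil_share(week)
print(f"Toil share this week: {share:.0%}")
```

Tracking this number week over week shows whether toil is trending toward or away from the 50% ceiling mentioned earlier.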

Once measured, leaders can identify high-volume tasks that should be automated first. Good automation candidates are tasks that are frequent, predictable, low-risk, and rule-based. Examples include certificate renewal alerts, environment provisioning, service restarts, log collection, capacity threshold checks, and standard rollback actions.
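One possible way to turn those criteria into a shortlist is sketched below. The `Task` fields, the sample tasks, and the ranking by monthly minutes are all illustrative assumptions, not a prescribed method: a task is eligible only if it is predictable, low-risk, and rule-based, and eligible tasks are ordered by how much time they consume.

```python
from dataclasses import dataclass


@dataclass
class Task:
    name: str
    runs_per_month: int      # how frequent the task is
    minutes_per_run: int
    predictable: bool
    low_risk: bool
    rule_based: bool


def is_candidate(task: Task) -> bool:
    """A task qualifies only if it meets all three qualitative criteria."""
    return task.predictable and task.low_risk and task.rule_based


def rank_candidates(tasks):
    """Order eligible tasks by total minutes spent per month, highest first."""
    eligible = [t for t in tasks if is_candidate(t)]
    return sorted(
        eligible,
        key=lambda t: t.runs_per_month * t.minutes_per_run,
        reverse=True,
    )


# Invented sample data.
tasks = [
    Task("service restart", 40, 10, True, True, True),
    Task("certificate renewal", 6, 30, True, True, True),
    Task("log collection", 25, 15, True, True, True),
    Task("manual database failover", 2, 120, False, False, False),
]
for task in rank_candidates(tasks):
    print(task.name, task.runs_per_month * task.minutes_per_run, "min/month")
```

The high-risk failover is excluded even though each run is expensive, which matches the idea that automation should start with safe, repeatable work.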

The goal should not be “automate everything.” The better goal is to remove repetitive work that prevents engineers from solving higher-value reliability problems.

Challenge 2: Tool Overload Is Creating More Noise Than Clarity

Many enterprises now use separate tools for logs, metrics, traces, synthetic monitoring, incident response, ticketing, cloud cost monitoring, security events, APM, infrastructure monitoring, and user experience monitoring. This creates a major problem: teams may have more data but less clarity.

Grafana’s 2025 Observability Survey highlights tool overload as a major industry theme and reports that 101 different observability technologies were cited by respondents. New Relic’s 2025 Observability Forecast also reported that organizations are actively reducing observability tool sprawl, with the average number of observability tools per organization dropping 27% since 2023.

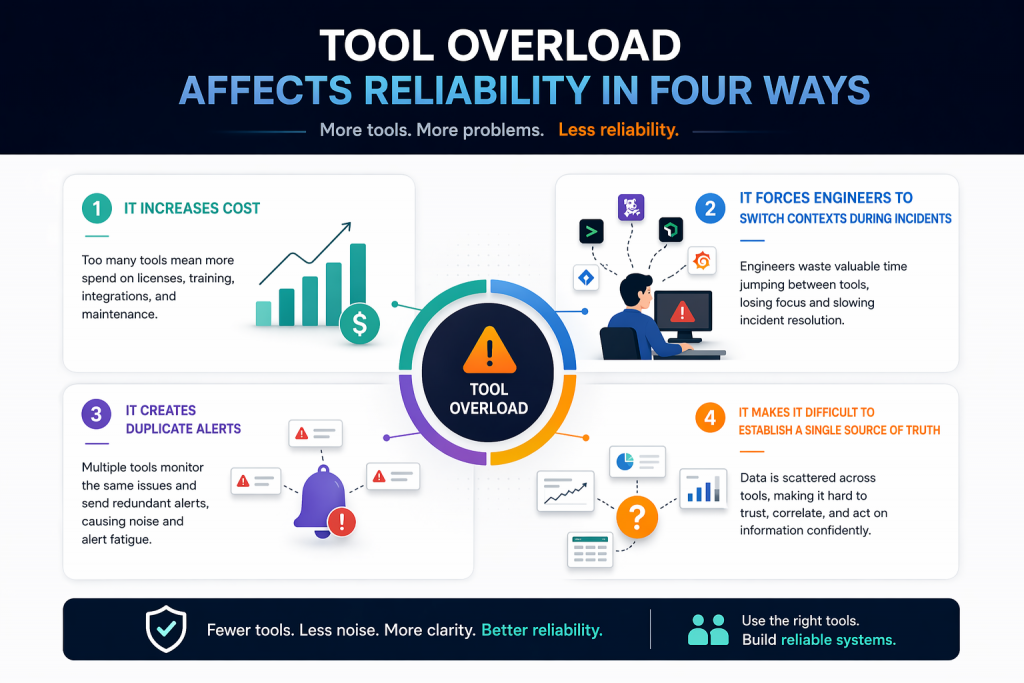

Tool overload affects reliability in four ways. First, it increases cost. Second, it forces engineers to switch contexts during incidents. Third, it creates duplicate alerts. Fourth, it makes it difficult to establish a single source of truth.

How Organizations Can Fix Tool Overload

Enterprises should conduct an observability tool audit every six months. The audit should answer five questions:

| Question | Why It Matters |
|---|---|
| Which tools are used daily? | Identifies core platforms |
| Which tools duplicate functionality? | Reduces cost and noise |
| Which tools support SLO reporting? | Connects observability to reliability |
| Which tools slow down incident response? | Improves MTTR |
| Which tools are required for compliance? | Avoids risky removal |

The ideal observability strategy is not necessarily one tool. It is a connected system where alerts, traces, logs, metrics, incidents, ownership, and business impact can be understood quickly.
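The "duplicate functionality" question in the audit can be answered mechanically once a tool inventory exists. The sketch below assumes a hand-maintained mapping from tool to capabilities; the tool names (`tool_a` and so on) and the capability labels are placeholders, not real products.

```python
from collections import defaultdict


def overlapping_capabilities(inventory):
    """Return {capability: [tools]} for capabilities covered by more than one tool."""
    tools_by_capability = defaultdict(list)
    for tool, capabilities in inventory.items():
        for capability in capabilities:
            tools_by_capability[capability].append(tool)
    return {
        capability: sorted(tools)
        for capability, tools in tools_by_capability.items()
        if len(tools) > 1
    }


# Placeholder inventory: names and capabilities are invented for illustration.
inventory = {
    "tool_a": {"metrics", "logs"},
    "tool_b": {"logs", "traces"},
    "tool_c": {"metrics"},
    "tool_d": {"synthetic monitoring"},
}
for capability, tools in sorted(overlapping_capabilities(inventory).items()):
    print(f"{capability}: covered by {', '.join(tools)}")
```

Overlap alone does not mean a tool should be removed; the compliance and daily-usage questions from the audit still apply before any consolidation.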

Challenge 3: Alert Fatigue Is Causing Missed Incidents

Alert fatigue happens when teams receive too many alerts, too many false positives, or too many alerts without clear action. Over time, engineers stop trusting alerts. That is dangerous because critical signals may get ignored during real incidents.

Recent reporting based on Splunk’s 2025 observability findings noted that 75% of UK IT teams experienced downtime due to missed critical alerts, while tool sprawl, false alerts, and alert volume contributed to stress and missed signals.

Alert fatigue is not only a technical issue. It is also a human issue. Engineers under constant alert pressure face stress, context switching, sleep disruption, and burnout. Reliability suffers when teams are always reacting but rarely improving the system.

How Organizations Can Fix Alert Fatigue

Alerts should be designed around user impact, not internal noise. A good alert should meet three conditions:

- It indicates a real or near-real customer-impacting issue.

- It has a clear owner.

- It includes an action or runbook.

Low-priority alerts should move to dashboards or reports. Duplicate alerts should be grouped. Alerts without action should be removed. Teams should also review every major incident and ask: “Which alert helped us, which alert distracted us, and which alert was missing?”
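The three conditions above can be checked automatically during an alert review. The following is a minimal sketch, assuming alert rules are described with a hypothetical `AlertRule` record; the rule names, team names, and runbook URL are invented.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class AlertRule:
    name: str
    customer_impacting: bool
    owner: Optional[str] = None
    runbook_url: Optional[str] = None


def review_alert(rule: AlertRule) -> List[str]:
    """Return the conditions a rule fails; an empty list means it may page."""
    problems = []
    if not rule.customer_impacting:
        problems.append("no customer impact: move to a dashboard or report")
    if not rule.owner:
        problems.append("no clear owner")
    if not rule.runbook_url:
        problems.append("no action or runbook")
    return problems


# Invented sample rules.
rules = [
    AlertRule(
        "checkout_error_rate_high",
        customer_impacting=True,
        owner="payments-team",
        runbook_url="https://example.com/runbooks/checkout",
    ),
    AlertRule(
        "disk_usage_above_70_percent",
        customer_impacting=False,
        owner="platform-team",
    ),
]
for rule in rules:
    problems = review_alert(rule)
    print(rule.name, "->", "OK to page" if not problems else "; ".join(problems))
```

A check like this could run whenever alert definitions change, so rules without an owner or runbook are caught before they reach an on-call engineer.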

Challenge 4: SLOs Are Still Poorly Defined

Many organizations claim to use SRE but do not define strong Service Level Objectives. They track uptime, CPU usage, memory consumption, or ticket counts, but they do not always measure what customers actually experience.

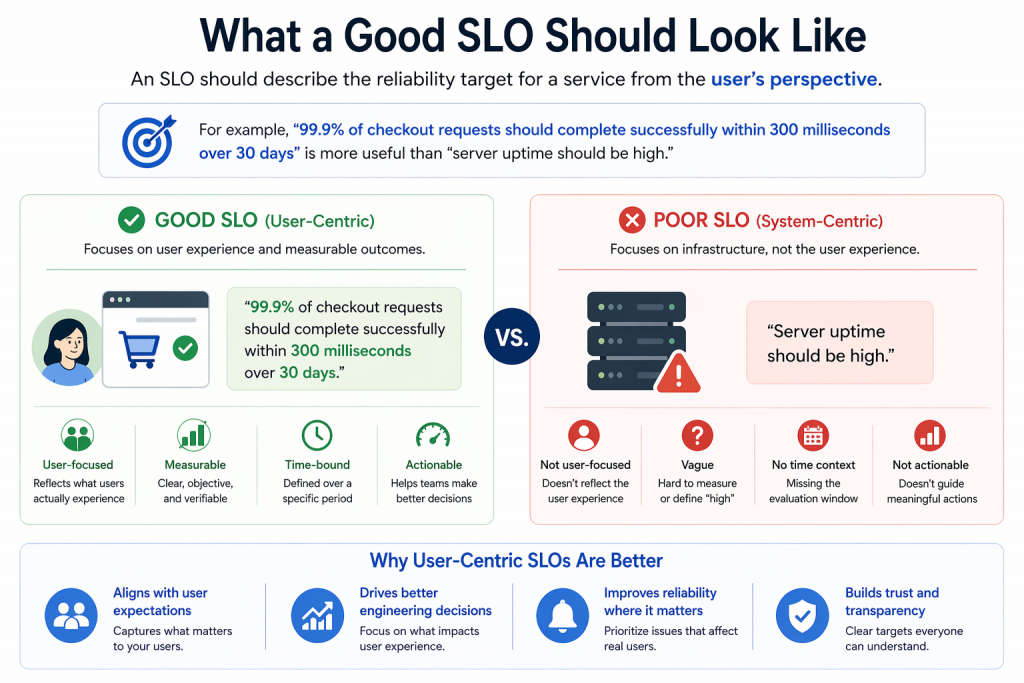

An SLO should describe the reliability target for a service from the user’s perspective. For example, “99.9% of checkout requests should complete successfully within 300 milliseconds over 30 days” is more useful than “server uptime should be high.”

Without SLOs, teams struggle to make trade-offs. Product teams push features, operations teams push stability, and leadership pushes speed. Error budgets help resolve this conflict by making reliability measurable.
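To make the error budget concrete, here is a small sketch based on the checkout SLO quoted above (99.9% of requests successful within 300 milliseconds over 30 days). The request counts are invented, and the function names are illustrative rather than taken from any particular tool.

```python
SLO_TARGET = 0.999        # 99.9% of checkout requests must be "good"
LATENCY_LIMIT_MS = 300    # a good request completes within 300 ms


def is_good(succeeded: bool, latency_ms: float) -> bool:
    """SLI definition: the request succeeded and met the latency limit."""
    return succeeded and latency_ms <= LATENCY_LIMIT_MS


def error_budget(total_requests: int, bad_requests: int,
                 slo_target: float = SLO_TARGET):
    """Return (allowed_bad_requests, fraction_of_budget_remaining)."""
    allowed_bad = total_requests * (1 - slo_target)
    remaining = allowed_bad - bad_requests
    remaining_fraction = remaining / allowed_bad if allowed_bad else 0.0
    return allowed_bad, remaining_fraction


# Invented numbers for one 30-day window.
allowed, remaining = error_budget(total_requests=2_000_000, bad_requests=500)
print(f"Allowed bad requests in the window: {allowed:.0f}")
print(f"Error budget remaining: {remaining:.0%}")
```

With these sample numbers the budget is about 2,000 bad requests and roughly three quarters of it is unspent, which gives product and operations teams a shared figure to use when deciding whether to ship or stabilize.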

Example: Weak Metrics vs Strong SRE Metrics

| Weak Metric | Better SRE Metric |
|---|---|
| Server uptime | Successful user transactions |
| CPU usage | Request latency experienced by users |
| Number of incidents | Customer-impacting incident minutes |
| Ticket closure rate | Time to detect and restore service |
| Deployment count | Change failure rate and rollback rate |

Challenge 5: Incident Response Is Still Too Reactive

In many organizations, incident response depends on heroics. A few experienced engineers know how systems work, where logs are stored, which service owns what, and whom to call. This may work for small teams, but it fails at enterprise scale.

A mature SRE organization creates repeatable incident processes. It defines severity levels, escalation paths, incident commander roles, communication templates, stakeholder updates, post-incident reviews, and action tracking.

The best SRE teams do not treat incidents as blame events. They treat them as learning opportunities. Every incident should improve the system, the process, or the team’s knowledge.

Practical Incident Response Improvements

| Area | Improvement |
|---|---|
| Detection | Alert on SLO burn rate, not just infrastructure thresholds |
| Ownership | Map every service to a team and escalation contact |
| Communication | Use incident channels and stakeholder update templates |
| Recovery | Maintain tested rollback and failover procedures |
| Learning | Run blameless post-incident reviews |
| Prevention | Track corrective actions until closure |
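The "Detection" row mentions SLO burn rate. Burn rate is the observed error rate divided by the error rate the SLO allows, so a value of 1 means the budget would be spent exactly at the end of the window. The sketch below pages only when both a long and a short window exceed a threshold. The default of 14.4 (paired with 1-hour and 5-minute windows) follows an example in Google's SRE Workbook and should be treated as a starting point; the request counts are invented.

```python
def burn_rate(bad: int, total: int, slo_target: float) -> float:
    """Observed error rate divided by the error rate the SLO allows."""
    if total == 0:
        return 0.0
    return (bad / total) / (1 - slo_target)


def should_page(long_window, short_window, slo_target, threshold=14.4):
    """Page only if both windows burn faster than the threshold.

    Each window is a (bad_requests, total_requests) tuple. Requiring the
    short window as well stops the alert from firing long after the
    problem has ended.
    """
    return (
        burn_rate(*long_window, slo_target) >= threshold
        and burn_rate(*short_window, slo_target) >= threshold
    )


# Invented counts: last hour and last five minutes for a 99.9% SLO.
last_hour = (200, 10_000)
last_five_minutes = (30, 1_000)
print("Burn rate (1h):", round(burn_rate(*last_hour, 0.999), 1))
print("Page on-call:", should_page(last_hour, last_five_minutes, 0.999))
```

Compared with a fixed CPU or error-count threshold, this ties paging directly to how quickly customers are consuming the error budget.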

Challenge 6: AI and Automation Are Helpful but Not Magic

AI is becoming part of reliability engineering through AIOps, incident summarization, anomaly detection, log analysis, and automated root cause suggestions. PeopleCert’s official training material updates note that SRE Practitioner v1.3 added content around GenAI in automation, Value Stream Management platforms, Platform Engineering, and AIOps.

However, AI will not fix poor reliability foundations. If alerts are noisy, ownership is unclear, telemetry is incomplete, and runbooks are outdated, AI may only accelerate confusion. AI works best when organizations already have clean observability data, clear service maps, strong SLOs, and disciplined incident processes.

How to Use AI in SRE Responsibly

Organizations can start with low-risk use cases such as incident summarization, alert grouping, runbook recommendations, anomaly detection, and post-incident report drafting. Human approval should remain mandatory for high-risk actions such as production changes, failovers, security responses, and customer-impacting automation.
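The human-approval rule can be enforced in code rather than by convention. The following is a minimal sketch, assuming each automated or AI-suggested action carries a type label; the labels in `HIGH_RISK_ACTIONS` and the `execute` helper are hypothetical names chosen for this example.

```python
from typing import Callable, Optional

# Assumption: action types mirror the high-risk categories listed above.
HIGH_RISK_ACTIONS = {
    "production_change",
    "failover",
    "security_response",
    "customer_impacting_automation",
}


def execute(action_type: str, run: Callable[[], None],
            approved_by: Optional[str] = None) -> str:
    """Run low-risk actions directly; hold high-risk ones until a human approves."""
    if action_type in HIGH_RISK_ACTIONS and not approved_by:
        return "pending_approval"
    run()
    return "executed"


log = []
print(execute("incident_summarization", lambda: log.append("summary drafted")))
print(execute("failover", lambda: log.append("failover started")))
print(execute("failover", lambda: log.append("failover started"),
              approved_by="on-call lead"))
print(log)
```

Keeping the gate in one function makes it easy to audit which actions ran without approval and to tighten the high-risk list over time.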

Challenge 7: SRE Skills Are Not Growing Fast Enough

SRE requires a blend of skills: software engineering, Linux, cloud platforms, networking, observability, incident management, automation, DevOps, security, resilience engineering, and stakeholder communication. Many organizations expect DevOps engineers or system administrators to “become SREs” without structured development.

This creates inconsistent implementation. One team may focus on monitoring, another on automation, another on incident response, and another on cloud operations. Without a common framework, SRE becomes a job title instead of a capability.

PeopleCert’s SRE Foundation certification introduces SRE principles, SLOs, error budgets, toil reduction, automation, and observability. Its SRE Practitioner certification focuses on applying SRE culture, automation, observability, secure resilient systems, and scalable reliability practices.

Practical SRE Roadmap for Organizations in 2026

| Stage | Focus Area | Key Actions |
|---|---|---|
| Month 1 | Assess reliability maturity | Identify critical services, current incidents, tools, toil, and ownership gaps |
| Month 2 | Define SLOs | Build SLIs, SLOs, and error budgets for top business services |
| Month 3 | Reduce alert noise | Remove duplicate alerts and tune alerts around customer impact |
| Month 4 | Automate toil | Automate repetitive tickets, checks, deployments, and recovery tasks |
| Month 5 | Improve incident response | Create incident commander roles, runbooks, and review templates |
| Month 6 | Build SRE capability | Train teams through SRE Foundation and Practitioner learning paths |
| Ongoing | Mature reliability culture | Use error budgets, chaos testing, platform engineering, and continuous improvement |

Real-World Example: Fixing a Reliability Gap

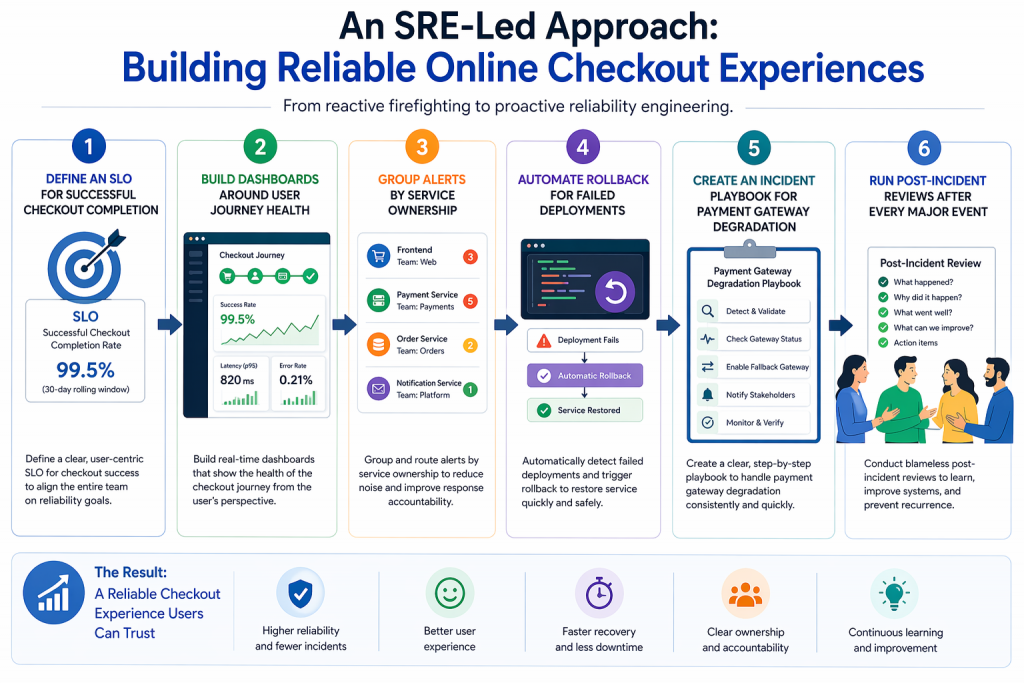

Imagine an e-commerce company facing repeated checkout failures during campaign days. The team has monitoring tools, but alerts come from six platforms. Developers blame infrastructure. Infrastructure teams blame third-party payment APIs. Business leaders only see revenue loss.

An SRE-led approach would change the operating model. First, the team defines an SLO for successful checkout completion. Second, they build dashboards around user journey health. Third, they group alerts by service ownership. Fourth, they automate rollback for failed deployments. Fifth, they create an incident playbook for payment gateway degradation. Finally, they run post-incident reviews after every major event.

The result is not just fewer outages. The organization gains better decision-making, faster recovery, cleaner ownership, and stronger customer trust.

FAQs

1. What are the biggest SRE challenges in 2026?

The biggest SRE challenges in 2026 include toil, tool overload, alert fatigue, weak SLO adoption, unclear incident ownership, cloud complexity, and a shortage of skilled SRE professionals across enterprise technology teams.

2. How can organizations reduce toil in SRE teams?

Organizations can reduce toil by measuring repetitive work, automating predictable tasks, improving runbooks, removing unnecessary approvals, using self-service platforms, and allowing SRE teams to focus on engineering improvements instead of manual operations.

3. Why is tool overload a problem for SRE?

Tool overload creates duplicate alerts, higher costs, slower troubleshooting, dashboard confusion, and poor incident visibility. SRE teams need connected observability, not disconnected tools that increase noise during production incidents.

4. How do SLOs help improve reliability?

SLOs help teams define reliability from the customer’s perspective. They guide engineering priorities, error budgets, release decisions, incident reviews, and business conversations around acceptable risk and service performance.

5. Is SRE certification useful for professionals and enterprises?

Yes. SRE certification helps professionals understand reliability engineering, SLOs, toil reduction, observability, automation, and incident response. For enterprises, it creates a common SRE language across DevOps, cloud, platform, and operations teams.

Conclusion

SRE success in 2026 is not about adopting more tools. It is about reducing complexity, improving engineering discipline, and aligning reliability with real business outcomes. Organizations struggling with toil, tool overload, alert fatigue, and unclear SLOs must shift toward a structured reliability model that prioritizes automation, streamlined observability, and proactive incident management.

Enterprises that succeed with Site Reliability Engineering (SRE) are those that treat reliability as a product feature, not just an operational responsibility. This means defining service level objectives (SLOs) based on user experience, reducing manual intervention through automation, consolidating monitoring tools into connected observability platforms, and building strong incident response frameworks with clear ownership.

From a strategic perspective, the future of SRE lies in platform engineering, AIOps integration, and reliability-driven DevOps transformation. Organizations should invest in structured training, such as the SRE Foundation and Practitioner certifications, and in an enterprise-wide reliability culture to bridge skill gaps and ensure consistency across teams. When implemented correctly, SRE enables faster releases, less downtime, improved customer satisfaction, and measurable business resilience.

For professionals and enterprises looking for a place to start, the highest-impact areas are toil reduction, observability strategy, incident management, and structured SRE training. Progress in these areas delivers long-term value and a competitive advantage.